About

I am learning Common Lisp, so I am experimenting and playing around with things. It started with writing a small command-line parsing library, and ended with a trip into a rabbit hole of an experiment. I do not know if someone will find it useful, perhaps not, but it is nice to write things down, it sorts of clean thoughts, at least for me.

Command line parsing in C

Typically, for parsing program options, we use something like getopt & co in C/C++, or some similar offering. But if you have lots of options and want faster parsing, you will probably use gperf. Gperf is a code generator which can construct perfect hash from a given set of keywords, i.e. our program options. For this experiment, I used keywords of the C language, rather than some imaginary options.

To construct the perfect hash, we just pass a list of keywords in a text file to gperf program:

gperf --no-strlen C23-keywordsand back we get a c23-keywords.c. The generated file contains a function to check if a keyword is in the set or not. I have manually renamed that function to "in_c23_set". The original name was "in_keyword_set" or something like that, I do not remember, it has been a few days ago.

Base case

I am talking about gperf because it is the fastest way we can do this and is faster than hash tables and certainly faster than linear probing each option in a list of if-else options. If you are aware of something faster than gperf, please let me know.

The test itself is in ckeywords.c. The code is not so interesting. It will open the very same C23-keywords file and read in keywords in an array so we can query them in a loop. There is a little "warm up loop", and then the main test loops one million times and queries 59 keywords of C in each loop, so overall 59 million lookups:

beg = clock();

for (int i = 0; i < 1000000; i++)

for (int w = 0; w < NKWDS; w++) {

k = keywords[w];

if (in_c23_set (k.string, k.length))

throaway = k.string;

}

end = clock();I have compiled with -O3, -mtune=native and -march=native, you can see the makefile. In order to convince the compiler not to throw away the computations. I do a write on each lookup and print out the last lookup. The test is very synthetic indeed, but I need something to compare the code and execution time for Emacs Lisp, Common Lisp and C.

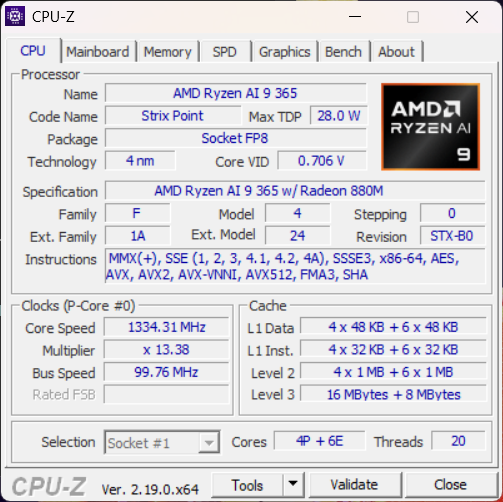

The system used:

OS Name: Microsoft Windows 11 Home OS Version: 10.0.2620

0 N/A Build 26200

Do not take my results too seriously. The CPU seems to vary the frequency depending on which core it runs. I am not sure what is going on to be honest, but I do get varying results. One thing I am sure of: when running on battery alone, the CPU is running with a reduced frequency, so I did all the tests with the power cable connected to the wall.

Running the program:

arthu@Emmi UCRT64 ~/repos/cl/keyword-test

$ ./ckeywords-static.exe

Time: 0.160000To use a C library from Lisp, we typically compile it as a dynamic loadable (shared) object. I compiled a shared library out of c23-keywords.c, just so I can compare the static versus dynamically linked program.

$ ./ckeywords-shared.exe

Time: 0.183000I would think it falls into the error margin, but I see this consistently on many runs, so I assume, calling a function from a dynamic library is just very slightly slower than a statically linked one. It was more notable when I ran on the battery.

Lisps

Lisp is not a single language. It is a family of languages. There are numerous Lisps, serving various purposes, from being embedded in tiny hardware devices like uLisp, to being used as an embeddable scripting language like Guile or ECL, to run on Java platform such as Clojure or ABCL. There is experimenting, language implementation platform, Racket, and a good application development Lisps with optimizing compilers such as SBCL or CCL.

Emacs Lisp

The most popular, at least the most used of all Lisps in current use, seems to be Emacs Lisp though, a built-in Lisp for scripting the GNU Emacs editor. GNU Emacs itself has evolved from Gosling's Emacs, which was a text editor with a pseudo-lisp, so called, "mock lisp" as its scripting language, into a full-fledged "lisp implementation". For lots of people, me included, Emacs is the entrance into the Lisp world. It was my first encounter with Lisp, and thus far still a Lisp I am most familiar with.

I am really in love with Emacs as the application. Personally, for years now, I think it would be a great CS teaching tool. Everything is moldable, it is straightforward to write a function, evaluate it, execute it, and step through it when debugging. At the same time while stepping through, one can switch to input or output buffers and see how input and output to a program are consumed or generated, all while program is executed one expression at the time.

With that convenience, it is just natural that we would like to use Emacs Lisp for writing more general programs than just extending Emacs. I am not sure if GNU Emacs is there, but we can use it to write scripts, and technically, it would be possible to use it as a framework for custom applications, though it is quite technical and involved process.

Anyway, here I would just like to see how Emacs Lisp compares to Common Lisp and how both compares to C, as well as how we can use C from both. It is just curiosity, do not take it as partisan writing for either of those three languages.

List and Hash in Emacs Lisp

First let us read the keywords into a list:

(defvar *keyword-list*

(with-temp-buffer

(insert-file-contents-literally "C23-keywords")

(cl-loop while (not (eobp))

with lines do

(push

(buffer-substring-no-properties (pos-bol) (pos-eol))

lines)

(forward-line)

finally (cl-return lines))))The simplest way to know if an element is in a set, is to check if a keyword is in a list (set) with member function:

(defun ckeywords-list-benchmark ()

(benchmark-run-compiled 1

(let (throwaway)

(garbage-collect)

(cl-loop

repeat 1000000 do

(setf throwaway nil)

(cl-loop for word in *keyword-list*

when

(cl-member word *keyword-list* :test #'string=) do

(setf throwaway word))))))As expected this is slow. It took so long that I eventually pressed C-g to cancel the computation. I have of course compiled the file with the "native compiler" (GCC), before the test.

We can do better with a hash table:

(defvar *keyword-table*

(let ((ht (make-hash-table :test #'equal)))

(dolist (keyword *keyword-list*)

(setf (gethash keyword ht) keyword))

ht))

(defun ckeywords-table-benchmark ()

(benchmark-run 1

(let (throwaway)

(garbage-collect)

(cl-loop repeat 1000000 do

(setf throwaway nil)

(cl-loop for word in *keyword-list*

when (gethash word *keyword-table*) do

(setf throwaway word)))

(print throwaway))))

;; (0.4601723 1 0.06320889999999224)I think this is the best we can do with pure Elisp, out of the box so to say. I am not familiar with any perfect hash library for Emacs, nor about some other library that might do better than the hash lookup. If you know about some, let me know.

I do not think it is bad, it is ~4x slower than the C version. But it is OK for the convenience a relatively general-purpose programming Lisp implementation offers directly from the text editor. As said, the test is very synthetic. In real-life applications, we usually do not look up millions of strings in a loop.

As a little regression, Emacs Lisp has pcase, a pattern matching library, which is built-in and even preloaded into Emacs runtime. It is good, and we can easily construct code to test all the 59 keywords. Something like this for example:

(defun match-pcase (string)

(cl-macrolet ((matchstring (s)

`(pcase ,s

,@(mapcar (lambda (w) (list w t)) *speakers*))))

(matchstring string)))It expands into a cond-form which means we do basically a linear probing on string equality. It is slower than hash table version.

In regard to this test, I would like to mention string-case, a Common Lisp library which generates tests first on the string length and then constructs a decision tree for each. It does very well in Common Lisp, which will be described later.

I have ported it to Emacs Lisp, but since we do not have a compiler and as sophisticated type inference engine, it does not matter at all since compiler can't optimize away all the functions call as SBCL can. If you compare it to the original string-case for CL, on x86, they emit some optimized assembly. SBCL emits optimized code for functions, based on the involved types, similarly as GCC can do for C functions (say strlen or memcpy). This is not something we can do in Emacs, at least not as of now. I believe they are working on the native compiler and type inference engine, but I have no idea how far they have come with that work. I see more function declarations every time I look up doc strings, but I really do not know where the work is going.

My port is quite bad too, it is probably possible to make it more efficient, but I have not invested the time. If someone is interested in giving it a run and a look, the code is in string-case.el in the repo for this blog post.

I did though try the main idea of that library: to group functions based on length and produce a cond form for each group. The worst case, when all strings are of equal length, should be the same as code produced by pcase. On average, where we have several groups of various lengths, we should be able to cut off all but one group and than do less string equality tests. I have done that, but I don't see any speedup worth talking about. I guess in practice, equal already checks the string length before comparing characters, so ending up doing some extra tests for length does not matter (checking string length in Elisp is a constant time operation).

Emacs Modules

When I was at it, I did play a bit with Emacs modules too. Emacs modules are a way for a third-party developer to call into C, either for speed or just to expose functionality to Lisp.

#define BUFFER_SIZE 250

#define _unused(var) _##var __attribute__((unused))

/* This is of course not a proper way to do things. In real code

we would check for errors and convert utf8 string to proper encoding.

For this example, we are assuming ascii text and

are not checking for errors. For proper handling, see the manual or some

other code. */

static emacs_value c23_keyword_p(emacs_env *env, ptrdiff_t _unused (nargs),

emacs_value args[], void* _unused (data)) {

static char buffer[BUFFER_SIZE];

emacs_value keyword = env->make_global_ref (env, *args);

ptrdiff_t keyword_length = 0;

if (!env->copy_string_contents(env, keyword, 0, &keyword_length))

return Qnil;

if (keyword_length > (ptrdiff_t) BUFFER_SIZE - 1)

return Qnil;

env->copy_string_contents (env, keyword, buffer, &keyword_length);

return in_c23_p (buffer, (size_t)keyword_length-1) ? Qt : Qnil;

}To call it from Elisp:

(module-load (expand-file-name "ckeywords-module.dll"))

(declare-function c23-keyword-p "c23-keywords.c")

(defun ckeywords-module-benchmark ()

(benchmark-run 1

(let (throwaway)

(garbage-collect)

(cl-loop repeat 1000000 do

(setf throwaway nil)

(cl-loop for keyword in *keyword-list*

when (c23-keyword-p keyword) do

(setf throwaway keyword)))

(print throwaway))))I get 4.1 seconds (4.112878299999999 1 0.032796099999999884).

I am not an expert in writing Emacs modules, but I think it will do. The code is in ckeywords-module.c.

The slowness is not the code, but the strategy. Calling low-level functions like this is not the best strategy, since we are paying the cost for packing and unpacking Emacs pointers 59 million times. Obviously, a better way of using a module is to write the test completely in C and export just the function to call the test, i.e. a high-level function call. In other words, making lots of calls to module API adds overhead.

If you check the code in ckeywords-module.c, c23_keywords_test does exactly that. There we can measure just the double loop, as we did in a pure C program in ckeywords.c:

beg = clock();

for (int i = 0; i < 1000000; i++)

for (int w = 0; w < NKWDS; w++) {

k = keywords[w];

if (in_c23_set (k.string, k.length))

temp = k.string;

}

end = clock();The result is hovering about ~0.08 ~ 0.1 seconds, same as for the statically linked C program. The conclusion I draw from this experiment is that Emacs modules are good for exposing the functionality to Emacs Lisp. However, when it comes to execution speed, modules can be used if we do not do too many interactions with Emacs runtime. Otherwise, the overhead for packing and unpacking data to and from Emacs runtime adds up noticeably. In other words, we can get the raw C speed for the code that runs relatively independent from Emacs core, at in hot loops.

Common Lisp version

I started this while writing a library for Common Lisp, so how does Common Lisp fare in the same test? SBCL is an optimizing compiler, and if I try a simple list version as in above, we can see some difference:

(eval-when (:compile-toplevel)

(defvar *keyword-list*

(with-open-file (stream "C23-keywords")

(loop for line = (read-line stream nil)

while line

collect (coerce line 'simple-base-string)))))

(defun ckeywords-benchmark ()

(let (throwaway)

(sb-ext:gc :full t)

(time

(loop repeat 1000000 do

(setf throwaway nil)

(loop for word in *keyword-list*

when (member word *keyword-list* :test #'string=) do

(setf throwaway word))))

(print throwaway)))As said, it is not fast, but at least I did not have to interrupt it:

CKEYWORDS-LIST> (ckeywords-table-benchmark)

Evaluation took:

11.592 seconds of real time

11.593750 seconds of total run time (11.578125 user, 0.015625 system)

100.02% CPU

23,141,381,500 processor cycles

0 bytes consedSimilarly as in Emacs, a hash table instead of a list gives a noticeable performance:

CKEYWORDS-TESTS> (ckeywords-table-benchmark)

Evaluation took:

0.895 seconds of real time

0.890625 seconds of total run time (0.875000 user, 0.015625 system)

99.55% CPU

1,787,211,040 processor cycles

0 bytes consedSurprisingly, it is not as fast as Emacs Lisp. Nevertheless, there is a drastic difference from using a list. From 12 seconds, to less than 1 second is quite a difference just by changing the data structure for the lookup.

Those two tests above are just standard Common Lisp. It is possible to do better without adding much extra work, by using a better data structure. In a discussion on Reddit, someone pointed me to string-case by Paul Khuong. String-case is not a perfect hash generator, but it generates a state machine or a decision-tree as they call it.

It was not much harder to use it than a list or a hash table:

(defun match-keyword-p (keyword)

(declare (type simple-base-string keyword))

(macrolet ((matchstring (s)

`(string-case (,s)

,@(mapcar (lambda (w)

(declare (type simple-base-string w))

`(,w t))

*keyword-list*))))

(matchstring keyword)))

(defun ckeywords-string-case-benchmark ()

(let (throwaway)

(sb-ext:gc :full t)

(time

(loop repeat 1000000 do

(setf throwaway nil)

(loop for keyword in *keyword-list*

when (match-keyword-p keyword) do

(setf throwaway keyword))))

(print throwaway)))Finally, the result

CKEYWORDS-LIST> (ckeywords-string-case-benchmark)

Evaluation took:

0.204 seconds of real time

0.203125 seconds of total run time (0.2

03125 user, 0.000000 system)

99.51% CPU

408,907,180 processor cycles

0 bytes consedFinally, if I inline the match-keyword-p, (declaim (inline match-keyword-p)), I get the same speed as with C version, unless SBCL has optimized away my code :):

CKEYWORDS-LIST> (ckeywords-string-case-benchmark)

Evaluation took:

0.080 seconds of real time

0.093750 seconds of total run time (0.093750 user, 0.000000 system)

109.30% CPU

171,971,140 processor cycles

0 bytes consedThere we have it: with speed of C in Lisp, by a n00b without any expert knowledge, by the power of a good Lisp compiler and a relatively simple library written by an expert (were the real work happened). SBCL version used for this is 2.6.3.

As said, this is a completely synthetic and unrealistic benchmark but I needed something I could measure.

Using C in SBCL

With SBCL, we can relatively simply call C functions from Lisp:

(load-shared-object "ckeywords.dll")

(define-alien-type sizet (unsigned 64))

(define-alien-routine ("in_c23_set" c23-keyword-p) c-string

(kwd c-string)

(len sizet))The point here is that we don't need to use any speical API to make a loadable SBCL module, we can just use an ordinary shared library as compiled for any C program, thanks to the built-in foreign-function interface (FFI).

A benchmark based on the wrapper:

(defun ckeywords-ffi-benchmark ()

(let (throwaway)

(sb-ext:gc :full t)

(time

(loop repeat 1000000 do

(setf throwaway nil)

(loop for word in *keyword-list* do

(let ((w word))

(declare (type simple-base-string w))

(c23-keyword-p w (length w))

(setf throwaway word)))))

(print throwaway)))I do get a warning about SAP to pointer coercion, which I am not sure how, and if possible, to optimize away. The performance seems to be similar to that of Emacs module. Lots of consing (memory allocations) occurring too:

CKEYWORDS-TESTS> (ckeywords-ffi-benchmark)

Evaluation took:

3.912 seconds of real time

3.906250 seconds of total run time (3.734375 user, 0.171875 system)

[ Real times consist of 0.056 seconds GC time, and 3.856 seconds non-GC time. ]

[ Run times consist of 0.062 seconds GC time, and 3.845 seconds non-GC time. ]

99.85% CPU

7,810,786,720 processor cycles

3,791,983,408 bytes consedHere, we can eliminate some of the consing. SBCL automatically converts strings between C and Lisp, for our convenience. In C, we get just a pointer back, but SBCL seem to see that pointer and copies the string and generates a Lisp string as the return value. We can rewrite the function to return a boolean value instead, check in_c23_p function. There is still an overhead calling C function, but the timing is much better when consing is gone:

CKEYWORDS-TESTS> (ckeywords-ffi-benchmark)

Evaluation took:

1.876 seconds of real time

1.875000 seconds of total run time (1.859375 user, 0.015625 system)

99.95% CPU

3,745,222,160 processor cycles

0 bytes consedWe can also inline the call and get some more performance, but the overhead of FFI is still there:

CKEYWORDS-TESTS> (ckeywords-ffi-benchmark)

Evaluation took:

1.305 seconds of real time

1.312500 seconds of total run time (1.312500 user, 0.000000 system)

100.54% CPU

2,605,504,699 processor cycles

0 bytes consedObviously, making 59 million ffi calls is not the best strategy, just as it was not in the case of Emacs module. A better strategy is to construct the test in C, and call that instead, and pay the overhead of ffi call only once. The same strategy as in the case of C module. I have not done that for this test, but at this point I do not think it is really interesting, the result would be obviously the same as with Emacs module or standalone C application since we would be again measuring just the double loop.

The Conclusion

The point here is that pure Lisp code was not so much slower than pure C code, given that one understands how to write code for SBCL and has some expert knowledge. At least in this case the performance is on par. Another point was that a compiler that understands Lisp is important. GCC, as Emacs native compiler cannot really produce efficient code out of Lisp functions. One would need to teach it how to produce optimized code for Lisp functions just as it does for C functions.

Finally, the convenience of coding in Lisp, is not even remotely comparable to coding C, especially when we have to handle strings in pure C. It literally took a few minutes to write Lisp code. Personally, writing Lisp feels more like typing pseudo-code than real code. At least compare how code for C module in Emacs looks like and how similar code in Lisp looks like. Of course, that is a very subjective point, and I have to admit that it did not come overnight either.

This little post might appear as if I am comparing these three languages to each other to find which one is best, but that is not what I mean. I do not think those three languages are really comparable to each other in that way. In other words I did not mean to compare them in a bad way, to diminish one compared to the other. I think all three serve different purposes and play in different leagues and should be used accordingly.

The repo

All the code, and some other not described here is in a public repo. It is just small tests, not much care is given to make something reusable, so I don't recommend using it for anything.

If you find errors in my measurements, or have suggestions, especially if you make string-case for Emacs Lisp go faster, please let me know.

A Last Word

I hope you find it useful or entertaining at least. The entire article and code were written by a human, in all its entirety, no AI was used for writing the code nor this text, for the better or worse :).

Also, I am aware of command-line parsing libraries for Common Lisp I can use. Clon seems to be an insanely well done one and is my current favorite, and yes, I have seen Clingon and some others. But as usual, I had an itch to scratch. I might write about it another day though.